What 22 CTOs, CIOs, product managers and architects told us in structured interviews. Just the problem space as they described it.

Why this post exists

Over the past weeks we completed 22 interviews with CTOs, CIOs, product managers, architects and other senior leaders. The sample spans organisations from 6-person scale-ups to 2000-person enterprises, across financial services, healthcare, logistics, energy, telecom, public sector and manufacturing.

We used a fixed interview protocol based on The Mom Test: ask about the last concrete time something happened, not about hypothetical opinions. Roughly 30 minutes per interview, recorded and transcribed. Names and companies are kept out of this write-up on purpose, so people stay comfortable telling us hard things next time.

This post summarises what came up most. It also flags where we expected a pattern and did not find one. Nulls are findings too.

Nothing below is about any product. It is about the problem space.

1. Documentation drifts from reality at scale

This was the most frequent theme among leaders at medium and large organisations. Roughly 15 of 22 interviewees raised it without being prompted, usually with a specific recent example.

One enterprise architect at a large bank named three distinct causes in the same breath: developers interpret requirements differently from what was written, changes during implementation never get documented, and later bug fixes silently rewrite behaviour without touching the spec. They observed that the gap widens the longer a project lives and the more projects build on top of it.

A senior executive at an entertainment company put it bluntly: documentation is one of the worst things in software development because it always lags reality, and eventually nobody looks at it anymore.

A CIO at a mid-sized healthcare group reported the extreme end: the code itself is the only documentation. The user manual is maintained by business staff, not by IT.

A CTO at an industrial manufacturer described a concrete case where backend, hardware and software teams had to collaborate, one person wrote the Confluence page, and the others disagreed with how it was phrased. A dispute not about facts, but about which version counted as true.

A product manager describing a 3-year, multi-tens-of-millions payment project said new team members had to rediscover the current state by asking around, because documentation was scattered across systems and nobody knew where the authoritative version lived.

When we asked leaders to show us the original spec of their last shipped feature and confirm it matched reality, 0 of 22 could do so. Either they couldn't locate it, or they found it and confirmed it was stale. That is a clean unanimous result.

The important qualifier: this is a scale problem, not a universal one. The small, high-context teams we spoke to (a 6-person engineering team at a fintech, a small team at a developer-tools company, and a small AI team) explicitly did not report documentation drift as current pain. Their reasoning was consistent: with 6 to 15 people in the room, the humans carry the consistency in their heads. Commit history plus a ticket tracker is enough. In small teams this problem does not hurt.

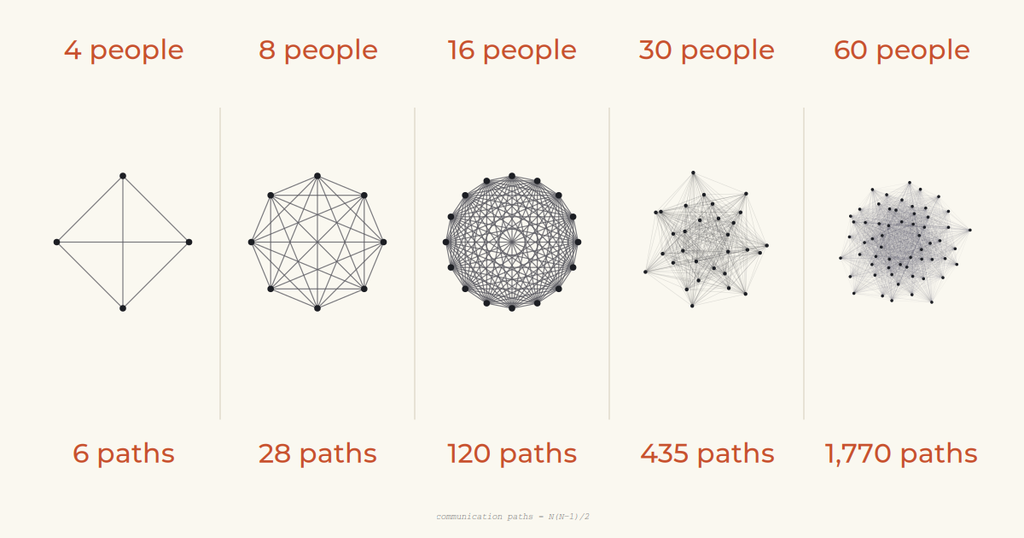

The physics of communication are the story here. With N people, the number of communication paths is N(N-1)/2. At 6 people that is 15 paths, manageable. At 80 engineers it is 3160. No human-in-the-loop process holds up against that kind of growth, and documentation stops being a nice-to-have and becomes the only mechanism left. The transition from "we all just know" to "nobody has the full picture" happens somewhere between 20 and 50 people, and it happens quietly.
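To make the arithmetic concrete, here is a minimal Python sketch of the pairwise-path formula. The function name and the choice of team sizes are our own illustrative assumptions; only the 6-person and 80-person figures come from the text above.

```python
def communication_paths(n: int) -> int:
    """Return the number of distinct pairwise paths among n people: n(n-1)/2."""
    if n < 0:
        raise ValueError("team size cannot be negative")
    # n * (n - 1) is always even, so integer division is exact.
    return n * (n - 1) // 2


# Illustrative team sizes (hypothetical, chosen to span the range discussed).
for size in (6, 15, 20, 50, 80):
    print(f"{size:>3} people -> {communication_paths(size):>5} paths")
```

Under this formula a 20-person team has 190 paths and a 50-person team has 1225, which brackets the 20-to-50 range where the transition seems to happen. The growth is quadratic: doubling headcount roughly quadruples the number of paths.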

2. A large share of rework traces back to bad initial scoping

A former CEO of a European logistics operator, now advising, stated it as a rule of thumb from 20 years of CEO experience: 80 percent of project problems originate in insufficient scoping before the project starts. Teams jump to solutions before agreeing on what the problem actually is.

Their example: a real-time goods-tracking project across Europe was driven by IT and customer service without the right operational people in the room. The team focused on UI screens while the real complexity sat in the operational data behind them. The project was stopped and restarted from scratch.

A product manager leading an advanced analytics team at a transport operator gave the clearest concrete number we got: a train energy-consumption model was built on the wrong data source. It took about two months to notice and seven months to redo. One project, seven months of rework, because one assumption was wrong at the start.

A senior executive at an entertainment company described a separate project for an appointment-planning application. The underlying training data did not match the target market. They only noticed during investor demos. The contract was ultimately terminated.

A CIO at a healthcare group identified a structural variant: business stakeholders submit solutions rather than problem statements, so features get built that don't address the underlying issue. They lack a business analyst to sit between business and IT and sharpen the requirement.

What nobody could give us: a percentage of their actual software budget spent on rework. We asked every interviewee. Everyone answered in vague terms: "a lot," "significant," "too much." Nobody pulled a number from a real budget. For the single most financially consequential question we asked, the data does not exist inside these organisations. That is itself a finding.

3. Decisions don't survive contact with time

If scoping failures are hard to quantify in budget terms, decision failures are even harder, because they rarely show up in any ledger at all.

A former senior leader at a large insurer gave the cleanest example. A team agreed on an eligibility criterion (10 employees versus 5) during a product definition phase. Four months later, during implementation, the decision was reopened because the documentation of who decided what and why wasn't clear. They noted a second pattern: when new managers joined, previous decisions were challenged because the historical context had evaporated.

A digital partner manager at a public sector organisation described the same dynamic: decisions get revisited in subsequent meetings because nothing was formalised. Personality and political pressure from partners influence which version of the decision wins the second time around.

A CIO described a more operational version: 80 tickets sitting in validation status with nobody having time to review them, while test reports get generated retroactively for audits.

Across interviews, the pattern was consistent. Decisions are made verbally or in chat, occasionally captured in a document, and then slowly drift as people leave, new people join, or implementation surfaces edge cases the original decision never addressed. The underlying question, who decided this, when, and on what basis, cannot reliably be answered three months later.

4. "Lost in translation" between layers is real, and it compounds with team size

This came up in roughly 10 of 22 interviews, usually in combination with theme 3.

One interviewee described the requirements chain at a large insurer: functional analyst hands to requirements engineer hands to business architect. Context leaks at every handoff. By the time the information reaches the developer, the original intent has been reinterpreted three times.

A product manager at a public sector organisation identified the primary pain as the business-IT split itself. Delivered work routinely doesn't match initial expectations. Not because developers are careless, but because the translation path between business intent and technical execution has too many steps and no enforcement.

A CTO at an industrial manufacturer made a forward-looking observation worth paraphrasing: even if you give every developer a coding assistant, the alignment problem between developers and product management remains. Their hypothesis: if the coding bottleneck disappears, the new bottleneck becomes how much context one human can hold across parallel workstreams.

An architect working in a regulated biotech environment made the sharpest structural point. In large organisations with unclear objectives and slow feedback loops, alignment meetings are necessary. In organisations with immediate feedback (their example was high-frequency trading firms) alignment meetings essentially don't exist. Alignment overhead is a symptom of slow feedback, not an independent problem.

5. Cross-artefact consistency is invisible work until it isn't

Theme 1 is about documentation going stale against code. This theme is about something different: keeping the chain of artefacts consistent with each other. Vision matches PRD. PRD matches ticket. Ticket matches design. Design matches what was built. Each link separately might be fine; the chain as a whole usually is not.

A former CEO in medical imaging said it plainly: consistency in documentation is far more important than people think. They cited end-of-life product management specifically. In medical imaging there is a 7-year mandatory spare-parts support window, and without consistent documentation across product generations, migration paths for customers become impossible to construct.

An enterprise architect at a large bank described how the organisation uses templates, SharePoint process descriptions, Confluence, GitHub and PowerPoint, all for different documentation layers, none automatically consistent with each other. Business process modelling in particular is not standardised: every team does it differently.

Another interviewee referred to Confluence as "a graveyard." Documents exist but are never checked for consistency against each other or against what was actually built.

Smaller teams we spoke to did not report this as current pain either, for the same reason as theme 1: the chain is short enough that one or two humans hold it in their heads.

What we expected to find and didn't

We went into these interviews with four hypotheses. Two held up cleanly. Two did not.

Held up: documentation drifts at scale (theme 1), and the wrong thing gets built because of poor upstream scoping (theme 2).

Did not hold up as stated: our assumption that alignment meetings consume 15+ hours per week per person. We asked every interviewee to walk through last week's calendar. Nobody produced a number that supported this. The honest answer was closer to the biotech architect's reframing: alignment time is a function of feedback loop speed, and 15 hours is neither a floor nor a ceiling. It is a made-up number that happens to sound plausible.

Did not hold up as stated: our assumption that the pain sits in tools like Jira and Confluence because they "store but don't enforce." Leaders don't experience the tools as the problem. They experience the gap between the tools and reality as the problem. The tools themselves are doing what they were designed to do: passive storage. The missing layer is upstream of the tool, not inside it.

Why this matters more now (AI changes the bottleneck)

One additional reason we think this problem space is becoming more urgent: as coding gets cheaper and faster with tools like Claude Code and other AI copilots, execution capacity increases, but the upstream alignment problem does not go away.

If a team can ship code 2× faster, then the cost of ambiguous specs, reopened decisions, and cross-artefact inconsistency compounds faster too: you can build the wrong thing at higher throughput. In other words, AI can shift the bottleneck from “writing code” to “keeping intent, decisions, and artefacts consistent over time.”

That makes the foundational questions even more operational:

- What is the current agreed intent?

- Who decided, when, and why?

- Where is the source of truth, and how do downstream artefacts stay consistent with it?

What none of this tells us

A few things we explicitly cannot claim from this dataset:

- We have no quantified budget impact. As noted above, nobody produced a real number.

- We have no evidence these problems are unique to mid-sized regulated companies. The sample skews that way on purpose; that is who we were looking for. Silicon Valley scale-ups might have different pain, or the same pain with different names.

- We have no evidence any current tool makes these problems worse. We heard frustration with tool sprawl, but nobody traced rework directly to a tool choice.

- We cannot claim these problems are universal. The small teams we spoke to did not report them, and this is worth stating clearly. Documentation drift, lost-in-translation, and cross-artefact inconsistency all appear to be consequences of team-size physics rather than of software development as a discipline. Below a certain headcount, humans in the room fill the gap. Above it, no amount of discipline seems sufficient.

A closing thought for leaders

We are continuing these interviews. If the patterns above resonate with what you're seeing, we'd like to hear your concrete examples. Specifically: your last shipped feature, your last reopened decision, your last piece of rework. That is the data that is genuinely missing from the public conversation about software delivery in regulated enterprises.

And the single most useful diagnostic we can offer, based on this research, is this: try to show someone the original spec of your last shipped feature and confirm it matches what's in production. If you can't, and in our sample 22 out of 22 couldn't, you are living inside the problem space this post describes. That alone is worth knowing.

Based on 22 structured interviews conducted between March and April 2026 across organisations in financial services, healthcare, logistics, energy, telecom, public sector and manufacturing. Interviewees included CTOs, CIOs, heads of product, enterprise architects and product managers, with team sizes ranging from 6 to 2000. All quotes paraphrased and anonymised.